Continued from the previous post.

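After finding the interface I looked at several images in a row and found they were all very small, so I downloaded one on my phone and checked the request URL in Wireshark.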

The URL from the interface contained images_min; in the actual download request it had become images.
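The rewrite itself is a single string substitution. Here is a minimal sketch, assuming the thumbnail directory always appears as its own path segment; the class name, method name, and sample URL are hypothetical and not taken from the site:

```java
// Illustrative only: the class, method, and sample URL are made up for this sketch.
class FullSizeUrlSketch {

    /** Swaps the thumbnail path segment for the full-size one; other URLs pass through unchanged. */
    static String toFullSizeUrl(String url) {
        return url.replace("/images_min/", "/images/");
    }

    public static void main(String[] args) {
        String thumb = "http://www.example.com/pics/images_min/a/001.jpg";
        System.out.println(toFullSizeUrl(thumb));
    }
}
```

Matching the segment with its surrounding slashes avoids touching a file that merely has images_min somewhere in its name.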

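So that's how it works. Once I knew, I tried it right away,

and sure enough, it worked.

But I couldn't very well open and download them one at a time.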

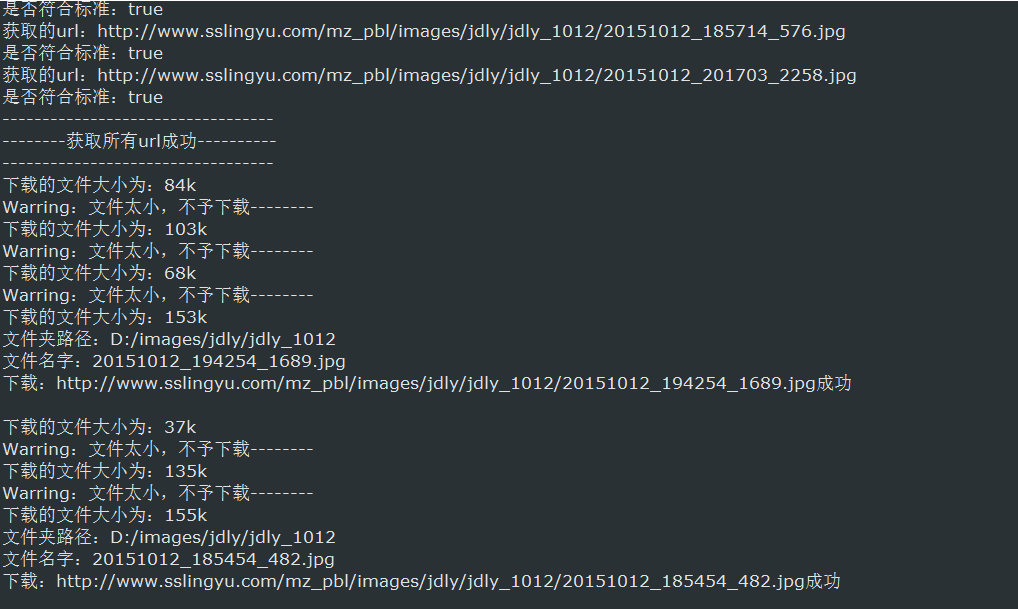

So I stayed up that night and wrote a crawler in Java.

Here is the code:

    package com.feng.main;

    import java.io.File;
    import java.io.FileOutputStream;
    import java.io.IOException;
    import java.io.InputStream;
    import java.io.InputStreamReader;
    import java.nio.charset.StandardCharsets;
    import java.util.ArrayList;
    import java.util.List;
    import java.util.regex.Matcher;
    import java.util.regex.Pattern;

    import org.apache.http.HttpEntity;
    import org.apache.http.HttpResponse;
    import org.apache.http.client.HttpClient;
    import org.apache.http.client.config.RequestConfig;
    import org.apache.http.client.methods.HttpGet;
    import org.apache.http.impl.client.HttpClients;

    public class DownLoadImg {

        // http URLs ending in one of the image/video extensions (dots escaped, hyphen last in the class)
        private static final String MEDIA_URL_REGEX =
                "http://([\\w-]+\\.)+[\\w-]+(/[\\w./?%&=-]*)?"
                + "\\.(jpg|mp4|rmvb|png|mkv|gif|bmp|jpeg|flv|avi|asf|rm|wmv)";
        // private static final String MEDIA_URL_REGEX = "http://www.sslingyu.com/mz_pbl/images_min\\S*\\.jpg";

        // Extensions stored under media/images; anything else goes to media/video
        private final List<String> imgFormat = new ArrayList<String>();

        DownLoadImg() {
            imgFormat.add("jpg");
            imgFormat.add("jpeg");
            imgFormat.add("png");
            imgFormat.add("gif");
            imgFormat.add("bmp");
        }

        /**
         * Entry point: fetch the page, collect the media URLs, download each one.
         * @param startUrl page to crawl
         */
        public void start(String startUrl) {
            String content = getContent(startUrl);
            // Collect all image/video links
            List<String> urls = getAllImageUrls(content);
            for (int i = 0; i < urls.size(); i++) {
                downloadImage(urls.get(i));
            }
            System.out.println("----------------------------------");
            System.out.println("--------Download finished---------");
            System.out.println("----------------------------------");
        }

        /**
         * Requests the URL and returns the response entity.
         * @return the response entity, or null if the request failed
         */
        private HttpEntity getHttpEntity(String url) {
            HttpClient httpClient = HttpClients.createDefault();
            HttpGet get = new HttpGet(url);
            RequestConfig requestConfig = RequestConfig.custom()
                    .setSocketTimeout(5000)            // max wait for data on the socket, in ms
                    .setConnectionRequestTimeout(5000) // max wait for a connection from the manager, in ms
                    .build();
            get.setConfig(requestConfig);
            try {
                HttpResponse response = httpClient.execute(get);
                // response.getStatusLine() carries the status; 200 means success
                return response.getEntity();
            } catch (IOException e) {
                e.printStackTrace();
                return null; // the original went on to dereference a null response here
            }
        }

        /**
         * Returns the whole HTML page as a String (empty if the request failed).
         */
        private String getContent(String url) {
            HttpEntity httpEntity = getHttpEntity(url);
            if (httpEntity == null) {
                return "";
            }
            StringBuilder content = new StringBuilder();
            // Assumes the page is UTF-8; the original used the platform default charset
            try (InputStreamReader isr =
                         new InputStreamReader(httpEntity.getContent(), StandardCharsets.UTF_8)) {
                char[] c = new char[1024];
                int l;
                while ((l = isr.read(c)) != -1) {
                    content.append(c, 0, l);
                }
            } catch (IllegalStateException | IOException e) {
                e.printStackTrace();
            }
            return content.toString();
        }

        /**
         * Extracts every image/video URL from the page content.
         * @param content HTML of the start page
         */
        private List<String> getAllImageUrls(String content) {
            Pattern p = Pattern.compile(MEDIA_URL_REGEX);
            Matcher m = p.matcher(content);
            List<String> urls = new ArrayList<String>();
            while (m.find()) {
                // Convert the thumbnail URL to the full-size one
                String url = getHDImageUrl(m.group());
                System.out.println("Found url: " + url + "  valid: " + isTrueUrl(url));
                if (isTrueUrl(url)) {
                    urls.add(url);
                }
            }
            System.out.println("----------------------------------");
            System.out.println("--------All URLs collected--------");
            System.out.println("----------------------------------");
            return urls;
        }

        /**
         * Downloads one file.
         * @return 1 on success, 0 if the file was skipped or the download failed
         */
        private int downloadImage(String url) {
            try {
                HttpEntity httpEntity = getHttpEntity(url);
                if (httpEntity == null) {
                    System.out.println("Download failed: " + url);
                    return 0;
                }
                // getContentLength() is -1 when the size is unknown, so those files are skipped too
                long len = httpEntity.getContentLength() / 1024;
                System.out.println("File size: " + len + "k");
                if (len < 150) {
                    System.out.println("Warning: file too small, skipping--------");
                    return 0;
                }
                String realPath = getRealPath(url);
                String name = getName(url);
                System.out.println("Folder: " + realPath);
                System.out.println("File name: " + name);
                // is.available() does not work for getting the size, hence getContentLength() above
                try (InputStream is = httpEntity.getContent();
                     FileOutputStream fos = new FileOutputStream(new File(realPath, name))) {
                    byte[] b = new byte[1024];
                    int l;
                    while ((l = is.read(b)) != -1) {
                        fos.write(b, 0, l);
                    }
                }
                System.out.println("Downloaded: " + url);
                return 1;
            } catch (Exception e) {
                System.out.println("Download failed: " + url);
                e.printStackTrace();
                return 0;
            }
        }

        /**
         * Creates the target folder if needed and returns its path.
         */
        private String getRealPath(String url) {
            Pattern p = Pattern.compile("images/[a-z]+/[a-z_0-9]+");
            Matcher m = p.matcher(url);
            String name = getName(url);
            // Take the text after the last dot, so names with several dots still work
            String format = name.substring(name.lastIndexOf(".") + 1);
            String path;
            if (imgFormat.contains(format)) {
                path = "media/images/"; // it is an image
            } else {
                path = "media/video/";
            }
            // Default sub-folder: the URL segment just before the file name
            String[] parts = url.split("/");
            path += parts[parts.length - 2];
            // If the URL contains images/xxx/yyy, use that as the folder instead
            if (m.find()) {
                path = m.group();
            }
            // Prepend the drive letter
            path = "D:/" + path;
            File file = new File(path);
            if (!file.exists()) {
                file.mkdirs();
            }
            return path;
        }

        /** Returns the file name: everything after the last slash. */
        private String getName(String url) {
            return url.substring(url.lastIndexOf("/") + 1);
        }

        /** Returns the full-size URL: simply replaces images_min with images. */
        private String getHDImageUrl(String url) {
            if (url.contains("images_min")) {
                return url.replace("images_min", "images");
            }
            return url;
        }

        /** Checks the URL format: it must start with http:// and end in one of the listed extensions. */
        private boolean isTrueUrl(String url) {
            // matches() already requires the whole string to match, so no ^ or $ is needed
            return url.matches(MEDIA_URL_REGEX);
        }
    }

That was the download class; below is the main function.

    package com.feng.main;

    public class MainTest {
        public static void main(String[] args) {
            DownLoadImg down = new DownLoadImg();
            String startUrl = "http://www.example.com";
            down.start(startUrl);
        }
    }

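The crawler fetches every image and video on a page (a single page only).

It pulls the URLs out of the page with a regular expression, and I also added a dedicated method that replaces images_min with images.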
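The extraction step can be tried on its own without any network access. The following is a rough sketch, not the crawler's exact code: the class name, the shortened extension list, the de-duplication, and the sample HTML are all assumptions made for illustration.

```java
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Illustrative only: names and sample data are made up for this sketch.
class MediaUrlExtractSketch {

    // http URL ending in a known media extension; the dot before the extension is escaped
    private static final Pattern MEDIA_URL = Pattern.compile(
            "http://([\\w-]+\\.)+[\\w-]+(/[\\w./?%&=-]*)?\\.(jpg|jpeg|png|gif|bmp|mp4|flv|avi)");

    /** Finds media URLs in the HTML, rewrites thumbnails to full size, and drops repeats. */
    static List<String> extract(String html) {
        Set<String> seen = new LinkedHashSet<>(); // keeps first-seen order
        Matcher m = MEDIA_URL.matcher(html);
        while (m.find()) {
            seen.add(m.group().replace("images_min", "images"));
        }
        return new ArrayList<>(seen);
    }

    public static void main(String[] args) {
        String html = "<img src=\"http://www.example.com/p/images_min/a/1.jpg\">"
                + "<img src=\"http://www.example.com/p/images_min/a/1.jpg\">"
                + "<video src=\"http://www.example.com/v/clip.mp4\"></video>";
        for (String url : extract(html)) {
            System.out.println(url);
        }
    }
}
```

De-duplicating is an addition here: a page that references the same image twice would otherwise download it twice.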

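I swapped in the interface link and started it running!

After waiting a few dozen minutes for it to finish, I opened the folder.

All good: the download was complete.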

(To be continued)